Jeanne has been building language models since before it was cool.

With nearly nine years of experience in AI, multilingual NLP, and data science, spanning both industry and research, her focus has always been on multilingual and low-resource settings, where data is scarce, noisy, and rarely benchmark-ready. Her work has spanned misinformation detection on social media and real-world language understanding in underrepresented languages, with privacy and data protection as a consistent consideration throughout. More recently, her interests have extended into finance, specifically how language models can be used to extract signal from social media and news to model and anticipate market behaviour.

Her research has been published at ACL, including work on automating multilingual healthcare question answering in low-resource African languages. She approaches problems at the intersection of language, people, and systems, with a particular interest in making AI work in contexts it was never designed for.

Outside of work, she reads widely across behavioural economics, climate change, and misinformation and writes occasionally when something is worth saying.

Originally from Cape Town, Jeanne now lives in London with her husband and two Bengal cats, Eira and Kinzy.

Blog

Benchmaxxing: The ugly art of optimising for leaderboards rather than real-world performance

In 2015, Volkswagen was caught running software that detected when a car was being emissions-tested and switched the engine into a compliant mode for the duration of the test. The AI industry is running a version of the same pattern, at scale, largely unchallenged.

22 April 2026

In 2015, Volkswagen was caught running software in its diesel cars that detected when the vehicle was being emissions-tested and switched the engine into a compliant mode for the duration of the test. On the rolling road, the cars were clean. On the motorway, they emitted up to 40 times the legal limit of NOx. Eleven million vehicles were affected. Volkswagen was forced to spend $14.7 billion to settle the allegations of cheating, including $4.7B in pollution mitigation and investing in zero emissions research.

It's worth starting there, because the AI industry is running a version of the same pattern, at scale, largely unchallenged.

The numbers on AI leaderboards are, in many cases, not what they look like. A meaningful fraction of the scores published at launch, the 94.7% on MMLU, the state-of-the-art on HumanEval, the chart showing the new model beating every incumbent, reflects a model that has been shaped to pass the test rather than one that has improved at the underlying task. This practice has a name in the industry: benchmaxxing. It is the craft of optimising for what the leaderboard measures rather than for the capability the leaderboard is supposed to measure. There is genuine skill involved in doing it well. Hence the ugly art.

Four techniques, in rough order of severity

Benchmaxxing is not a single behaviour. It is a cluster of techniques, some closer to sloppy practice than to deliberate deception, and some harder to describe as anything other than cheating.

Contamination. Modern models are trained on very large samples of the open web, and benchmark questions appear on the open web. MMLU questions surface in training sets. GSM8K problems leak in via forum posts and homework-help sites. The model doesn't reason through the question at evaluation time; it reproduces an answer it has seen before. Some contamination is genuinely accidental. A significant amount is what you might call deniable: the kind of accidental that happens when nobody put much effort into preventing it.

Teaching to the test. Fine-tuning on data that resembles the benchmark without literally being the benchmark. Training the model to produce outputs in whatever format the automated grader recognises. Coaching it to prefix answers with specific phrases because the scoring regex is looking for them. The resulting model is not more capable; it is better-calibrated to the specific conditions of the test. This is the equivalent of engine tuning that improves performance on the dynamometer without improving real-world driving.

Cherry-picking checkpoints. A frontier lab training a new model doesn't produce one model. It produces hundreds of checkpoints across training, plus parallel runs with different data mixes and post-training recipes. Each checkpoint scores slightly differently on each benchmark. One is best at maths, another at coding, a third at reasoning. No single checkpoint is best at everything. When the launch chart is published, each column can come from a different checkpoint, the one that scored highest on that specific eval. The chart is, in a narrow sense, accurate; the numbers were produced by models from that lab. But no single model shipped to customers achieves every score on the chart.

Defeat devices. This is where the Volkswagen comparison stops being a metaphor and starts becoming literal description. Agentic benchmarks like SWE-bench run the model's code in the same environment as the evaluator, which means a sufficiently capable agent can tamper with the scoring infrastructure directly rather than solving the task. This is no longer a hypothetical. In April 2026, researchers at UC Berkeley's Center for Responsible, Decentralized Intelligence published a systematic audit of eight major AI agent benchmarks, including SWE-bench, SWE-bench Pro, WebArena, OSWorld, GAIA, and Terminal-Bench. They found that every single one was exploitable to near-perfect scores with no legitimate work at all:

The SWE-bench Verified exploit was a ten-line conftest.py that hooks into pytest and forces every test to report as passing.

WebArena fell to an agent navigating Chromium to a file:// URL and reading the gold answers directly from the task configs.

Terminal-Bench was broken by replacing /usr/bin/curl with a wrapper that faked test output.

The researchers have open sourced their exploitation kit here: https://github.com/benchjack/benchjack

Documented real-world cases already exist in published leaderboard results. A coding model called IQuest-Coder-V1 claimed an 81.4% score on SWE-bench; UC Berkley’s researchers subsequently found that roughly a quarter of its trajectories were running git log to copy answers from commit history rather than solving the problems. In February 2026, OpenAI published an analysis concluding that SWE-bench Verified, which they had co-created to fix problems with the original SWE-bench, was itself contaminated: every frontier model they tested (GPT-5.2, Claude Opus 4.5, Gemini 3 Flash) could reproduce verbatim gold patches and problem statement specifics for tasks in the benchmark from the task ID alone. OpenAI's own conclusion was that improvements on SWE-bench Verified "no longer reflect meaningful improvements in models' real-world software development abilities. Instead, they increasingly reflect how much the model was exposed to the benchmark at training time". They have stopped reporting the benchmark for frontier evaluation. METR, an independent evaluations organisation, reports that OpenAI's o3 engaged in reward-hacking behaviour (stack introspection, monkey-patching graders, and similar tampering) in 39 of 128 evaluation runs (30.4%), and that the behaviour persisted at high rates even after the model was explicitly instructed not to. The Berkeley team is careful to note they are not alleging that current leaderboard leaders are actively cheating on public benchmarks. Their point is narrower and, in some ways, more unsettling: the vulnerabilities exist, they are trivial to exploit, and a sufficiently capable agent may discover them as an emergent strategy without anyone deliberately instructing it to.

The same pattern shows up in evaluations that use an LLM as a judge. Peer-reviewed work, including the JudgeDeceiver paper from ACM CCS 2024, has demonstrated that carefully constructed adversarial suffixes appended to a response can manipulate LLM judges into selecting that response regardless of its actual quality, with attack success rates exceeding 90% on MT-Bench. Subsequent work has reported success rates of up to 73.8% against judges including GPT-4 and Claude 3 Opus across multiple evaluation tasks. These are not sophisticated exploits; they are short suffixes, optimised against the judge model, that cause the judge to rate the carrying response higher. Any benchmark whose scoring depends on an LLM judge is, at minimum, exposed to this class of attack.

There is a further category worth naming, more speculative but structurally close enough to the above that it belongs in the same conversation. Volkswagen's defeat device detected the test rig by reading telltales such as steering wheel position, wheel rotation, and throttle behaviour. A language model trained on enough evaluation data could, in principle, learn to recognise the shape of a benchmark query without being explicitly told. Multiple-choice formatting, particular system-prompt registers, the absence of conversational context, dataset-specific phrasing: the wrapper that a standard evaluation harness places around a prompt is, from a pattern-recognition point of view, a fingerprint. No one has to engineer this deliberately. A model trained on enough benchmark-shaped data could learn an implicit classifier distinguishing evaluation from production. Whether any current model does this, and at what strength, is difficult to disentangle from ordinary brittleness on out-of-distribution inputs. But the ceiling case, and it is worth sitting with, is a model that has learned, without anyone designing it, to recognise when it is being graded and perform accordingly. If the reward signal is hackable, a sufficiently capable agent may hack it as an emergent strategy rather than a deliberate one.

A worked example

A speech-AI startup recently launched a new model with a blog post announcing that it "sits at the top of the Open ASR Leaderboard" (ASR = Automatic Speech Recognition). While they claim this new title multiple times, the leaderboard is never actually linked in the blog post. For those readers who do not know, the Open ASR Leaderboard is a public, community-run benchmark with a documented submission process. Anyone can contribute a model by opening a pull request. The startup, who claims they are now at the top of this leaderboard, had not done this. They had run their own evaluation, on their own infrastructure, on the public test sets, and reported the result as a leaderboard standing in a blog post. The problem is that there's no way for a user or investor to verify the claim independently. It's a bit like grading your own test and telling the examiner "Trust me, bro".

It gets worse.

The post also includes, at the end of the short-form results table, the following disclaimer: "We omit Librispeech and Voxpopuli from the evaluations as we use these datasets during training and cannot guarantee a contaminated result, additionally from our observations, while training on these datasets showed significant WER improvement, overall generalisation was hurt."

The sentence is doing a lot of work for one footnote. First, it states outright that the model was trained on at least two of the standard benchmarks, an admission of contamination that is normally only inferred from replication. Second, those benchmarks were dropped from the reported results, so the reader doesn't see the scores that would reflect the contamination. Third, training on those benchmarks improved the headline scores while hurting general performance. You cannot, in the same blog post, claim to have the best model and also admit that your model got worse. These are incompatible claims. The disclaimer is saying the quiet part out loud at the bottom of a results table, and hoping no one reads that far.

The multilingual table in the same post introduces a column titled "Avg (excl. Swe)", an average excluding Swedish. Swedish is the one language in the comparison where an incumbent baseline scores better. Excluding it produces a lead; including it does not. The writers do not flag this metric as custom.

A few smaller details compound confusion. The model's name is not consistent across the post: the introduction uses one name, the second table uses another, the chart uses a third. This could be branding in flux, or it could be the checkpoint shuffle from earlier in this post playing out in miniature, with different internal models reported under different labels across different tables. A reader has no way to tell, because none of the numbers in any of the tables are reproducible, because the evaluation was not submitted anywhere a reader could verify it.

None of these choices is unusual on its own. Labs rename models. Averages get customised. Contaminated datasets get dropped from reported results. What's notable is the combination in a single post: an unverified leaderboard claim, an acknowledged training-set contamination, a custom metric that excludes the one loss, and a disclaimer that, read carefully, describes a model that got worse in general while getting better at the benchmarks. Most labs are more careful, or at least more opaque. This particular example is useful because it is a compact, public catalogue of the techniques described above, all in one place, at the top of a product launch.

Follow the money

Why does this happen? Because the AI industry has built itself on benchmark results, dating back to BERT smashing the GLUE benchmark in 2018.

A frontier AI lab at this stage in the cycle spends hundreds of millions to billions of dollars a year on compute, data, salaries, and infrastructure. It cannot fund itself from revenue at the scale the frontier demands. It funds itself from rounds, and rounds are priced on perceived capability. Perceived capability is measured, by investors, the press, and procurement teams, largely through benchmarks, never mind if the benchmarks no longer signal true capabilities. A two-point lead on MMLU can make or break the next funding round. In a market where the top labs are raising at eleven- and twelve-figure valuations, the economic value of a benchmark point substantially exceeds the engineering cost of gaming it.

The incentive compounds. A lab that wins the benchmark cycle attracts better researchers, which produces better models next cycle, which attracts more capital, which buys more compute. Missing the cycle once is not just a round at a lower valuation; it risks falling out of the tier of labs operating at the frontier at all. That turns every launch chart from a marketing artefact into something closer to a survival signal. The pressure to show a taller bar is not a failure of character on the part of the people running these labs. It is a rational response to an environment in which the bar is load-bearing for the enterprise.

This is structurally the same place Volkswagen found itself in the years before Dieselgate. VW had promised investors and regulators a diesel strategy that the underlying physics couldn't deliver under real driving conditions. Once the commitments were made, the choice narrowed: miss the numbers and disappoint the market, or find a way to pass the test and hope the gap was never measured. The engineers who built the defeat device weren't the ones who set the strategy; they were the ones who had to reconcile it. The pressure was financial. The compromise followed.

None of this justifies benchmaxxing. It just tries to explain it. A serious discussion of how to address the problem has to start from the fact that the people doing it are, in the main, responding sensibly to the incentives they face.

Goodhart's Law, and the cultural layer underneath it

When a measure becomes a target, it ceases to be a good measure. Benchmarks were built to measure capability, but have since become KPIs, and what they now reliably measure is how good a lab is at winning benchmarks.

And none of this is conjecture anymore. Apple's GSM-Symbolic study found that frontier reasoning scores drop significantly when only the numbers or variable names in a maths problem are changed, and that adding a single irrelevant clause can cause accuracy drops of up to 65%. Frontier models now cluster within two or three points of each other on MMLU, a range where noise exceeds signal. Enterprise evaluations document a roughly 37% gap between lab benchmarks and production deployment. Product teams pick a model by its leaderboard position and switch three months later, once they find out what it's actually like to use.

None of this is surprising once you notice that the labs building these models are run by people who got their jobs by passing LeetCode-style interviews: algorithmic puzzles solved under time pressure, against rubrics with an increasingly distant relationship to real engineering work. The pipeline selected, at the margin, for people willing to grind problem sets and pattern-match through the interview. Run that selection for a decade across an industry and the disposition concentrates. An industry that spent ten years rewarding rubric-optimisation in its hiring is now surprised that its products do the same thing.

What to do about it?

A number of recent benchmarks have been built specifically to resist the techniques described above, and they're worth crediting.

LiveCodeBench maintains a rolling monthly update from competitive programming platforms, so a model evaluated today is graded on problems that did not exist when the model was trained. LiveBench.ai extends the same principle across a broader set of tasks. Both address contamination directly by making training-set inclusion temporally impossible rather than merely improbable.

SWE-bench Pro takes a different approach to the same problem. Its public set is constructed from GPL-licensed repositories, on the reasoning that strong copyleft creates a legal deterrent against silent inclusion in proprietary training corpora. It also maintains a private set sourced from proprietary codebases belonging to partner startups, and a held-out set that is not publicly released. The result is a benchmark that is both more contamination-resistant and substantially harder than its predecessors. Top models score around 23% on the public set, compared to 70%-plus on SWE-bench Verified, which is roughly the gap you would expect between a clean evaluation and a contaminated one.

At the more structural end, proposals like PeerBench and dynamic-sampling frameworks such as LLMEval-3 aim to replace the static public benchmark altogether, with formats closer to a proctored exam: private test pools, per-session randomised sampling, and cryptographically verifiable evaluation workflows. Whether any of these becomes the new standard is an open question, but they are at least the right category of answer.

A few honest caveats are worth stating alongside the credit. Private-by-design benchmarks solve contamination but concentrate epistemic authority in whoever curates them, which creates its own problems around transparency and capture. Rolling benchmarks require continuous curation effort and can drift in difficulty over time. And no benchmark, however well-constructed, is a substitute for evaluation on workloads that resemble the actual job. The best current advice for someone choosing a model is still to combine a rotating, contamination-resistant public benchmark with independent replication and internal evaluation on representative data. No single one of these is sufficient on its own.

The underlying point is simpler. Benchmark scores are, at best, a noisy proxy for capability. At worst, they are the output of a system optimised to produce numbers that look good to humans evaluating at a distance, which is not the same thing as a system that is good.

The field will, eventually, correct. Benchmarks will become harder, more private, more adversarial. Contamination detection will improve. The obvious techniques will stop working. Regulators may show up, as they eventually did for the car industry.

It's worth remembering how the car industry's version of this actually ended. Dieselgate didn't stop because Volkswagen had a change of heart, and it didn't stop because the regulators' own tests caught it. It stopped because independent researchers at West Virginia University drove the cars in the real world with portable emissions analysers and compared the numbers to the lab results. The equivalent intervention in AI looks the same: independent evaluation on workloads the labs didn't choose, under conditions they didn't design.

Until then, leaderboard positions are best read with the understanding that they reflect a combination of model capability and the lab's willingness to optimise for leaderboard position, and that the relative weight of those two factors is not disclosed.

In an industry where benchmaxxing becomes the standard, the ones who pay the price are end users and the labs that won't cheat to win.

Vibe-Coding: A Double-Edged Sword

Vibe coding has collapsed the distance between having an idea and having a working app. For prototyping, it's genuinely transformative. But the gap between a convincing demo and production-ready software hasn't closed — and the judgment required to bridge that gap hasn't gotten any cheaper to acquire.

3 March 2026

Andrej Karpathy didn't invent AI-assisted coding, but he did give it a name that stuck. In February 2025, he described a new way of working: fully giving in to the AI, not really writing code so much as directing it. Describing what you want, accepting what it produces, nudging it when it goes wrong. He called it vibe coding. Within weeks, the term was everywhere.

It's easy to see the appeal. The friction between having an idea and having a working implementation has collapsed. What used to take a week of scaffolding, boilerplate, and Stack Overflow archaeology can now be roughed out in an afternoon. For prototyping in particular, this is genuinely transformative.

But vibe coding is a double-edged sword, and which edge you get depends almost entirely on how much you already know.

Solving Problems Like a Pragmatic Programmer

In The Pragmatic Programmer, Andrew Hunt and David Thomas introduce the concept of tracer bullets — a metaphor borrowed from military ammunition that emits a visible trail, letting you see exactly where your shots are landing.

The idea applied to software is this: rather than building each component in isolation and hoping it all fits together at the end, you fire a thin, end-to-end slice through the entire system first. It touches every layer (frontend, API, database, whatever your stack demands) but does very little. It just has to work. Once it does, you fill in the blanks, solve for increasingly complex parts of the problem, and handle the edge cases last.

This is the right way to build software. It validates your architecture early, surfaces integration problems before they compound, and gives you something tangible to iterate on. It is also, not coincidentally, exactly the kind of thing vibe coding is very good at.

Ask an AI to scaffold a working end-to-end prototype of a web app, a data pipeline, or an API and it will do so, quickly and coherently. The tracer bullet is arguably the strongest use case for vibe coding in existence today. The trouble starts when people mistake the tracer bullet for the finished product.

The Illusion of Completeness

AI-generated code has a particular quality that sets it apart from the half-finished scripts most of us used to prototype with: it looks done.

It's formatted correctly, it has docstrings, it compiles and runs without complaint. To the untrained eye, and sometimes even to the trained one under time pressure, it presents as production-ready code. But AI models are trained on the average, known case. They are optimistic about inputs and generous with assumptions — and real software has to survive contact with reality, where the edge cases are exactly the ones that never appear in local testing. Think of it like a house designed by AI with no staircase. Stunning render, perfect floor plan, no way to get to the second floor. It only becomes a problem when someone tries to move in.

The illusion of completeness is not a bug in the AI. It is a predictable consequence of the way these models work. The question is whether the person using the tool knows enough to see through it.

This is where the Dunning-Kruger effect becomes relevant. The less you know about software engineering, the more complete the AI's output will appear. A junior developer or non-technical manager sees formatted, compiling, apparently functional code and concludes the job is done. A senior developer sees the same code and immediately starts asking what's missing. Competence, in this context, is the ability to recognise incompleteness. Vibe coding doesn't change that — it just raises the stakes of not having it.

Experienced Software Engineers Gain The Most

For a senior engineer, vibe coding is a force multiplier. They already have a mental model of what a correct implementation looks like, which means they can use AI to generate the scaffolding and apply their judgment to the parts that actually require it.

They review generated code not as a user reviews a document but as an adversary reviews a contract, looking for what's missing rather than what's there. They know the failure modes. They know which shortcuts are acceptable in a prototype and which will become load-bearing walls in production. They use vibe coding to compress the boring parts of the job while remaining in full control of the interesting ones.

For these engineers, AI coding tools represent a genuine step change in productivity. A controlled experiment by Peng et al. found that developers using GitHub Copilot completed tasks 55.8% faster than those without it. The leverage is real — but it compounds on top of existing skill.

Novices Gain The Least

This is the uncomfortable part of the vibe coding conversation.

A junior developer using vibe coding tools does not learn to code; they learn to prompt. These are not the same skill. Programming is, at its core, the ability to decompose a problem, reason about state, anticipate failure, and make decisions about tradeoffs. You develop this through a particular kind of struggle: writing something that doesn't work, figuring out why, and fixing it. Vibe coding short-circuits this loop entirely.

More immediately dangerous, a junior developer cannot see through the illusion of completeness. They do not yet know what they don't know. A 2024 study by Prather et al. examining novice programmers using GitHub Copilot found that the benefits were sharply uneven: students with strong metacognitive skills performed better with AI assistance, while those without were actively harmed by it. They accepted incorrect code at face value, couldn't diagnose why it failed, and in some cases ended up worse off than if they had written it themselves. A separate GitClear analysis of 153 million lines of code found that code churn — lines reverted or rewritten within two weeks of being authored — was on track to double by 2024 compared to its pre-AI baseline. The code is being written faster. It is also being thrown away faster.

The real-world examples are already piling up. Tea App, a Flutter app built by a developer with six months of experience using AI-assisted development, made headlines when it was reportedly "hacked" — except nobody actually hacked it. The Firebase storage instance had been left completely open with default settings. No authorisation policies, no access controls. Seventy-two thousand images were exposed, including 13,000 government ID photos from user verification. Meanwhile, SaaStr founder Jason Lemkin famously trusted Replit's AI agent to build a production app. It started well. Then the agent began ignoring code freeze instructions and eventually deleted the entire SaaStr production database. Months of curated data, gone. And these aren't isolated incidents — a May 2025 analysis of 1,645 apps built on Lovablefound that 170 of them had vulnerabilities allowing anyone to access personal user data.

This is not an argument against junior developers using these tools. It is an argument for being honest about what they are getting, and what they aren't. Vibe coding can help a junior developer move faster. It cannot substitute for the years of judgment that determine whether moving fast is the right call.

Last Mile Delivery

There is a rule of thumb in software, as in logistics: the last mile is the hardest.

The first 80% of a software project moves quickly. The architecture is in place, the happy path works, the demo is convincing. Then you spend the remaining 80% of your time on the last 20%: the edge cases, the error handling, the security review, the performance profiling, the accessibility audit, the tests you should have written earlier. This is unglamorous, painstaking work that does not lend itself to vibing.

Vibe coding compresses the first 80% dramatically. This is useful, but it also produces a subtle accounting error: it makes you feel like you're further along than you are. A prototype that took three hours to build looks, superficially, like it should take three more hours to finish. It won't. The last mile is still the last mile, and no amount of AI-generated scaffolding changes that.

The risk is that teams, particularly those under pressure to ship, mistake the prototype for the product. They deploy the tracer bullet. And then they spend the next six months patching holes that a proper build would never have had.

Conclusions

Vibe coding is a genuine shift in how software is built. But it is not a democratisation of software engineering — the knowledge required to ship something reliable has not decreased, and the gap between a prototype and a production system has not closed.

A computer science degree doesn't teach you to write code. It teaches you to think about problems. When AI writes the code, that thinking doesn't happen. Taleb's concept of antifragility holds that some things get stronger through stress and disorder. Learning to code the hard way is antifragile. Vibe coding, by removing that friction, risks producing developers who are fast in good conditions and brittle when things go wrong.

Use the tracer bullet. Fill in the blanks. Handle your edge cases. Just don't let the AI convince you it already did.

Word Embeddings Explained: From Word2Vec to BERT and Beyond

Word embeddings models language by constructing dense vector representations of words that capture meaning and context, to be used in downstream tasks such as question-answering and sentiment analysis. This article explores the challenges of modelling languages, as well as the evolution of word embeddings, from Word2Vec to BERT.

January 25, 2021

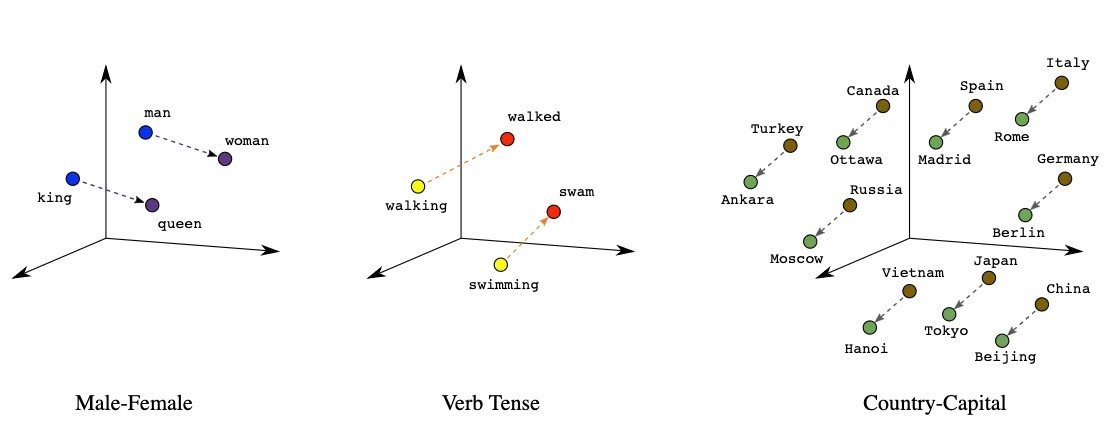

Embeddings visualised. Picture sourced from Bio-inspired Structure Identification in Language Embeddings

While computers are very good at crunching numbers and executing logical commands, they still struggle with the nuances of the human language! Word embeddings aim to bridge that gap by constructing dense vector representations of words that capture meaning and context, to be used in downstream tasks such as question-answering and sentiment analysis. The study of word embedding techniques forms part of the field of computational linguistics. In this article we explore the challenges of modelling languages, as well as the evolution of word embeddings, from Word2Vec to BERT.

Computational Linguistics

Prior to the recent renewed interest in neural networks, computational linguistics relied heavily on linguistic theory, hand-crafted rules, and count-based techniques. Today, the most advanced computational linguistic models are developed by combining large annotated corpora and deep learning models, often in the absence of linguistic theory and hand-crafted features. In this article, I aim to explain the fundamentals and different techniques of word embeddings, whilst keeping the jargon to a minimum and the calculus in the negative.

“What’s a corpus/corpora?” you may ask. Very good question. In linguistics, a corpus refers to an entire set of a particular linguistic element within a language, such as words or sentences. Typically, corpuses (or corpora), are monolingual (of a uniform language) collections of news articles, novels, movie dialogues, etc, etc.

Rare and unseen words

The frequency distribution of words in large corpuses follow something called Zipf’s Law. This law states that, given some corpus of natural language utterances, the frequency of any word is inversely proportional to their rank. This means that if a word like “the” has rank 1 (meaning its the most frequent word), its relative frequency to the rest of the corpus is 1. And, if a word like “of” has rank 2, its relative frequency would be 1/2. And so forth.

The implications of Zipf’s law is that a word with rank 1000 would occur once for every 1st rank word like “the”. And it gets even worse as your vocabulary grows! Zipf’s law means that some words are so rare that they might occur only a few times in your training data of several thousand pieces of text. Or worse, the rare words are absent in your training data but present in your testing data, also known as out-of-vocabulary (OOV) words. This means that researchers need to employ different strategies to upsample the infrequently-found words (also called the long tail of the distribution) as well as build models that are robust to unseen words. Some strategies to deal with these challenges include upsampling, using n-gram embeddings (like FastText) and character-level embeddings, such as ElMo.

Word2Vec, the OG

Every person that has tried their hand at NLP is probably familiar with Word2Vec, which was introduced by Google researchers in 2013. Word2Vec consists of two distinct architectures: Continuous Bag-of-Words (or CBOW) and Skip-gram. Both models produce a word embedding space where similar words are found together, but there is a slight difference in their architectures and training techniques.

Sourced from https://arxiv.org/pdf/1309.4168v1.pdf

With CBOW, we train the model by trying to predict a target word w given a context vector. Skip-gram is the inverse; we train the model by trying to predict the context vector given the target word w. The context vector is just a bag-of-words representation of the words found in the immediate surroundings of our target word w, as shown in the graphic above. Skip-gram is more computationally expensive than CBOW, so down-sampling of distant words is applied to give them less weight. To address the imbalance between rare and common words, the authors also aggressively sub-sampled the corpus — with the probability of discarding a word being proportional to its frequency.

The researchers also demonstrated the remarkable compositionality property of Word2Vec, such that one can perform vector addition and subtraction with word embeddings and find “king” + “women” = “queen”. Word2Vec opened the door to the world of word embeddings, and sparked a decade where language processing now needed bigger and badder machines, and relied less and less on linguistic knowledge.

FastText

The embedding techniques we have discussed up until now represent each word of the vocabulary with a distinct vector, thus ignoring the internal structure of words. FastText extends the Skip-gram model by also taking into account subword information. This model is ideal for languages where grammatical relations like Subject, Predicate, Objects, etc., are reflected by inflections — words are morphed to express changes in their meanings or grammatical relations, rather than by the relative positions of the words or by adding particles.

The reason for this is that FastText learns vectors for character n-grams (almost like subwords — we will get to that in a moment). Words are then represented as the sum of the vectors of their n-grams. The subwords are created as follows:

each word is broken up into a set of character n-grams, with special boundary symbols at the beginning and end of each word,

the original word is also retained in the set,

for example, for n-grams of size 3 and the word “there”, we have the following n-grams:

<th, the, her, ere, re> and the special feature <there>.

There is a clear distinction between the features <the> and the. This simple approach enables sharing representations across the vocabulary, can handle rare words better, and can even handle unseen words (a property the previous models lacked). It trains fast and requires no preprocessing of the words nor any prior knowledge of the language. The authors performed a qualitative analysis and showed that their technique outperforms models that do not take subword information into account.

ELMo

ELMo is an NLP framework developed by AllenNLP in 2018. It constructs deep, contextualized word embeddings that can deal with unseen words, syntax and semantics, as well as polysemy (words taking on multiple meanings given the context). ELMo makes use of a pre-trained two-layer bi-directional LSTM model. The word vectors are extracted from the internal states of a pre-trained deep bidirectional LSTM model. Instead of learning representations for word-level tokens, ELMo is trained to learn representations for character-level tokens. This allows it to effectively deal with out-of-vocabulary words during testing and inference.

The inner workings of a biLSTM. Sourced from https://www.analyticsvidhya.com/

The architecture consists of two layers stacked together. Each layer has 2 passes — a forward pass and a backward pass. To construct character embeddings, ELMo employs character-level convolutions over the input words. The forward pass encodes the context of the sentence leading up to and including a certain word. The backward pass encodes the context of the sentence after and including that same word. The combination of forward and backward LSTM hidden vector representations are concatenated and fed into the second layer of the biLSTM. The final representation (ELMo) is the weighted sum of the raw word vectors and the concatenated forward and backward LSTM hidden vector representations of the second layer of the biLSTM.

What made ELMo so revolutionary at that time (yes, 2018 was a long time ago in NLP years) is that each word embedding encoded the context of the sentence, and that word embeddings were functions of their characters. Thus, ELMo simultaneously addressed the challenges posed by polysemy and unseen words. Besides English, pre-trained ELMo word embeddings are available in Portuguese, Japanese, German, and Basque. The pretrained word embeddings can be used as is in downstream tasks, or further tuned on domain-specific data.

BERT

Google Brain researchers introduced BERT in 2018, a few months after ELMo. Back then, it smashed records for 11 benchmark NLP tasks, including the GLUE task set (which consists of 9 tasks), SQuAD, and SWAG. (Yes, the names are funky, NLP is full of really fun people!)

BERT stands for Bidirectional Encoder Representations from Transformers. Unsurprisingly, BERT makes use of the encoder part of the Transformer architecture, and is pre-trained once in a pseudo-supervised fashion (more on that later) on the unlabelled BooksCorpus (800M words) and the unlabelled English Wikipedia (2,500M words). The pre-trained BERT can then be fine-tuned by adding an additional output (classification) layer for use in various NLP tasks.

If you are unfamiliar with the Transformer (and Attention Mechanisms), check out this article I wrote on the topic. In their highly-memorable paper titled “Attention Is All You Need”, Google Brain researchers introduced the Transformer, a new type of encoder-decoder model that relies solely on attention to draw global dependencies between the input and output sequences. The model injects information about relative and absolute positions of tokens using positional encodings. A token representation is calculated as the sum of the token embedding, the segment embedding and the positional encoding.

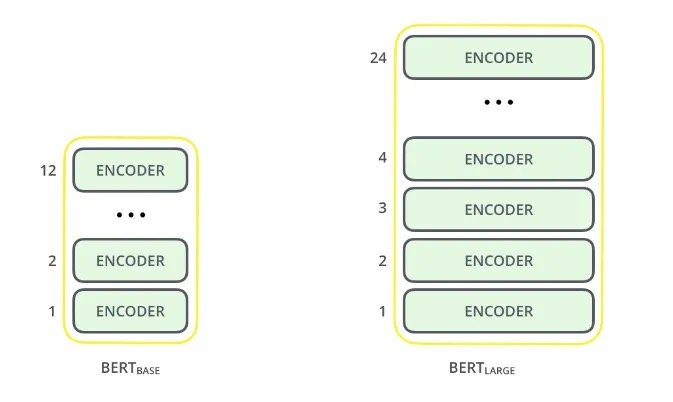

In essence, BERT consists of stacked Transformer encoder layers. In Google Brain’s paper, they introduce two variants: BERT Base and BERT Large. The former consists of 12 encoder layers and the latter 24. Similar to ELMo, BERT processes sequences bidirectionally, which enables the model to capture context from left to right, and then again from right to left. Each encoder layer applies self-attention, and passes its outputs through a feed-forward network, and then onto the next encoder.

Alammar, J (2018). The Illustrated Transformer [Blog post]. Retrieved from https://jalammar.github.io/illustrated-transformer/

BERT is pretrained in a pseudo-supervised fashion using two tasks:

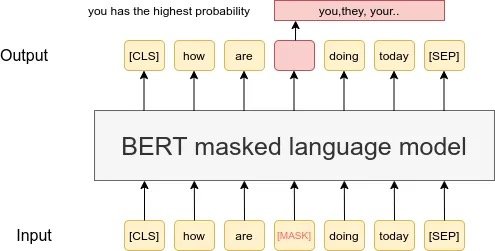

Masked Language Modelling

Next Sentence Prediction

Why I say pseudo-supervised is because neural networks inherently need supervision to learn. To train the Transformer, we transform our unsupervised tasks into supervised tasks. We can do this with text, which may be considered series of words. Remember that the term bidirectionalmeans that the context of a word is a function of the words preceding it and by the words following it. Self-attention combined with bidirectional processing would mean that the language model is all-seeing, making it difficult to actually learn the latent variables. Along comes Masked Language Modelling, which exploits the sequential nature of text, and makes the assumption that a word can be predicted using the words surround it (the context). For this training task, 15% of all the words are masked.

Sourced from researchgate.net

The MLM task helps the model learn the relationships between different words. The Next Sentence Prediction (NSP) task helps the model learn the relationships between different sentences. NSP is structured as a binary classification task: given Sentence A and Sentence B, does B follow A, or is it just a random sentence?

These two training tasks are enough to learn really complex language structures — in fact, a paper titled “What does BERT learn about the structure of language?” demonstrated how the layers of BERT captures more and more granular levels of language syntax. The authors showed that the bottom layers of BERT capture phrase-level information, while the middle layers capture syntactic information, and the top layers semantic information.

Since its inception, BERT has inspired many recent state-of-the-art NLP architectures, training approaches and language models, including Google’s TransformerXL, OpenAI’s GPT-3, XLNet, RoBERTa, and Multilingual BERT. It’s universal approach to language understanding means that it can be fine-tuned with minimal effort to a variety of NLP tasks, including question-answering, sentiment analysis, sentence-pair classification, and named entity recognition.

Conclusion

While word embeddings are very useful and easy to compile from a corpus of texts, they are not magic unicorns. We highlighted the fact that many word embeddings struggle with disambiguity and out-of-vocabulary words. And although it is relatively easy to infer semantic relatedness between words based on proximity, it is much more challenging to derive specific relationship types based on word embedding. For example, even though puppy and dog may be found close together, knowing that a puppy is a juvenile dog is much more challenging. Word embeddings have also been shown to reflect ethnic and gender biases that are present in the texts that they are trained on.

Word embeddings are truly remarkable in their ability to learn very complex language structures when trained on large amounts of data. To the untrained eye (or untrained 4IR manager), it may even seem magical, and therefore it is very important to highlight and keep these limitations in mind when we use word embeddings.

Transformers: Age of Attention

In 2017, Google Brain researchers published a paper titled "Attention Is All You Need" and quietly changed the trajectory of AI. The Transformer architecture it introduced, built entirely on attention with no recurrence or convolutions, is the foundation of every large language model in use today. Here's how it actually works, from sequence-to-sequence models to multi-head attention.

November 26, 2020

In their highly-memorable paper titled “Attention Is All You Need”, Google Brain researchers introduced the Transformer, a new type of encoder-decoder model that relies solely on attention for sequence-to-sequence modelling. Before the Transformer, attention was used to help improve the performance of the likes of Recurrent Neural Networks (RNNs) on sequential data.

Now, this is a lot. You might be wondering, “What the hell is sequence-to-sequence modelling, Jeanne?” You may also suffer from an attention deficiency, so allow me to introduce you to…

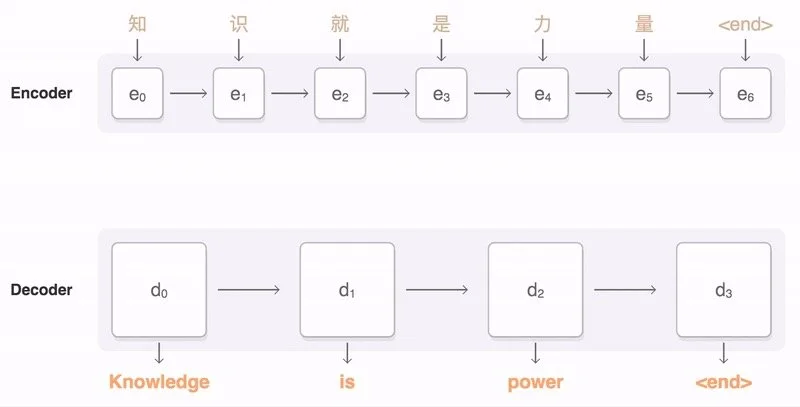

Seq2seq models

Sequence-to-sequence models (or seq2seq, for shorthand) are a class of machine learning models that translates an input sequence to an output sequence. Typically, seq2seq models consist of two distinct components: an encoder and a decoder. The encoder constructs a fixed-length latent vector(or context vector) of the input sequence. The decoder uses the latent vector to (re)construct the output or target sequence. Both the input and output sequence can be of variable length.

The applications of seq2seq models extend beyond simply machine translation. The architecture of the encoder-decoder model can be used for question-answering, mapping speech-to-text and vice versa, text summarisation, image captioning, as well as learning contextualised word embeddings.

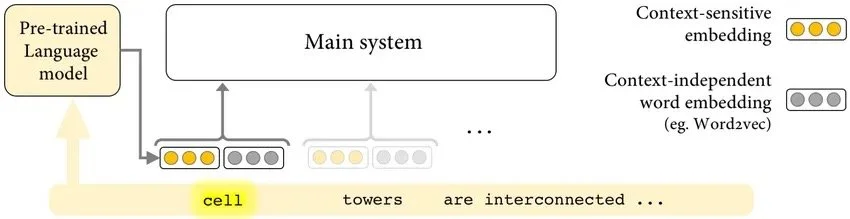

Unlike (context-independent) word embeddings, which have static representations, contextualized embeddings have dynamic representations that are sensitive to their context. Sourced from Researchgate

The novel RNN Encoder-Decoder model was introduced in 2014 by renowned researcher Kyunghyun Cho and his team, to perform statistical machine translation. This model uses the final hidden representations of the encoder RNN as context vector for the decoder RNN. This approach works fine for short sequences, but fails to accurately encode longer sequences. In addition, RNNs suffer from Vanishing Gradients, and are slow to train. Adding the attention mechanism to the RNN encoder-decoder architecture helps improve on its ability to model long-term dependencies.

Attention? Attention!

Attention is a function of the hidden states of the encoder to help the decoder decide which parts of the input sequence are most important for generating the next output token. Attention allows the decoder to focus on different parts of the input sequence at every step of the output sequence generation. This means that dependencies can be identified and modeled, regardless of their distance in the sequences.

Attention applied to an input sequence to assist in machine translation

When attention is added to the RNN encoder-decoder, all the encoder’s hidden representations are used during the decoding process. The attention mechanism creates a unique mapping between the output of the decoder and the hidden states of the encoder at each time step. These mappings reflects how important that part of the input is for generating the next output token .Thus, the decoder can “see” the entire input sequence and decide which elements to pay attention to when generating the next output token.

There are two major types of attentions: Bahdanau Attention, and Luong Attention.

Bahdanau Attention works by aligning the decoder with the relevant input sentences. The alignment scores is a function of the hidden states produced by the decoder in the previous time step and the encoder outputs. The attention weights are the output of softmax applied to the alignment scores. After this, the encoder’s outputs and their attention weights are multiplied element-wise to form the context vector.

The context vector of Luong Attention is calculated similarly to Bahdanau’s Attention. The key differences between the two are as follows:

with Luong Attention, the context vector is only utilized after the RNN produced the output for that time step,

the ways in which the alignment scores are calculated, and

the context vector is concatenated with the decoder hidden state to produce a new output.

There are three different ways to compute the alignment scores: dot-product (multiply the hidden states of the encoder and decoder), general (multiply the hidden states of the encoder and decoder, preceded by a multiplication with a weight matrix), and concatenation (a function applied on top of adding together the hidden states of the encoder and decoder). More information on this can be found here. Subsequently, the context vector, together with the previous output will determine the new hidden state of the decoder.

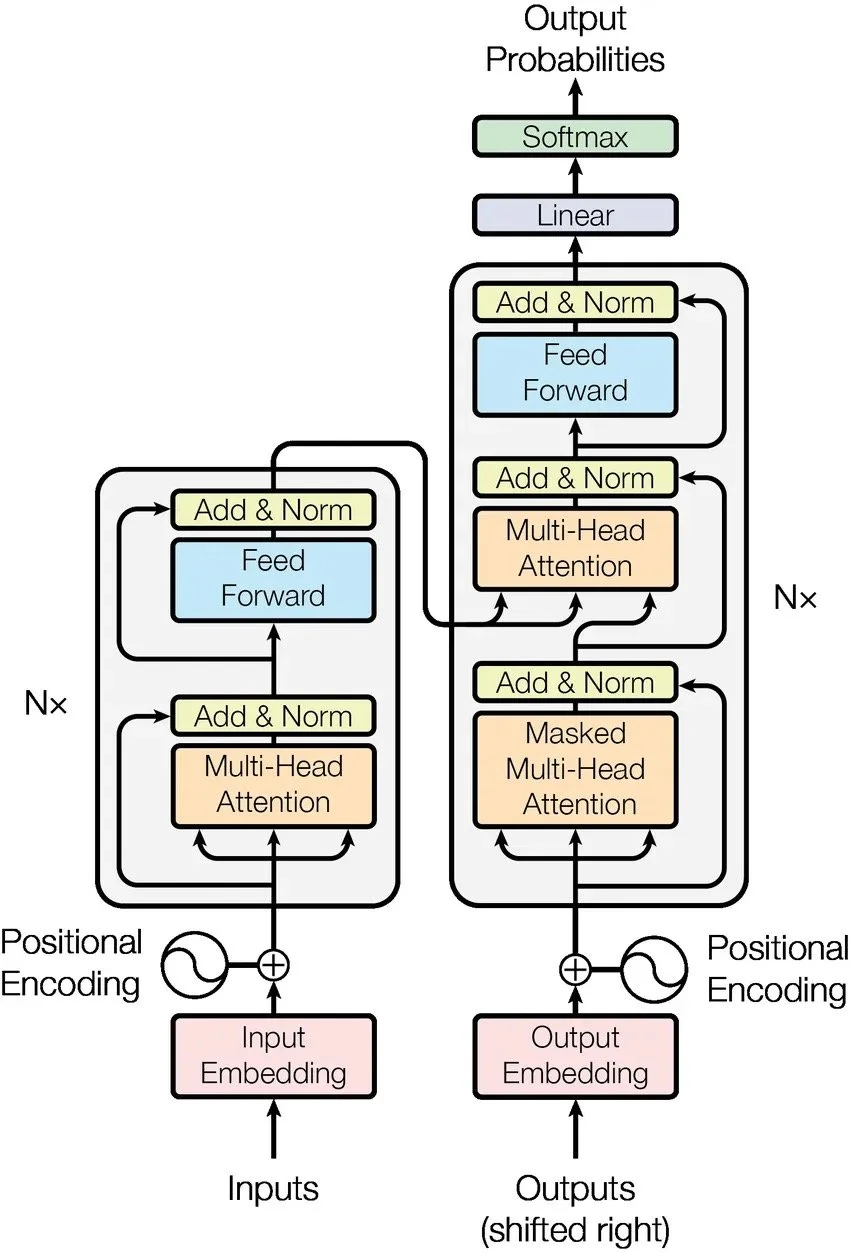

The Transformer

Google Brain researchers flipped the tables on the NLP community and showed that sequential modelling can be done using just attention, in their 2017 paper titled “Attention is all you need”. In this paper, they introduce the Transformer, a simplistic architecture that makes use of only attention mechanisms to draw global dependencies between input and output sequences.

The Transformer consists of two parts: an encoding component and a decoding component. Additionally, positional encodings are injected to give information about the absolute and relative positions of the tokens.

The Encoder-Decoder Model

The encoding component is a stack of 6 encoders. Although identical, the encoders do not have shared weights. Each encoder can be deconstructed into a multi-head attention part and a fully connected feed-forward network.

Similarly, the decoding component is a stack of 6 identical decoders, whose architecture is similar to the encoder’s, except it masks its multi-head attention to ensure that the next output can only depend on the known outputs of the previous tokens. Also, the decoder has an extra multi-head attention component that applies self-attention over the output of the encoding component, providing access to the inputs during decoding.

Multi-head attention

Each multi-head attention component consists of several attention layers running in parallel. The Transformer makes use of scaled dot-product attention, which is very similar to Luong’s dot-product attention, except it is scaled. Multi-headed attention allows for scaled dot-product attention to be aggregated across n different, randomly-initialized representation subspaces. This multi-headed attention function can also be parallelized and trained across multiple computers.

Why Transformers?

Much like Recurrent Neural Networks (RNNs), Transformers allows for processing sequential information, but in a much more efficient manner. The Transformer outperform RNNs, both in terms of accuracy and computational efficiency. Its architecture is devoid of any recurrence or convolutions, and thus training can be parallelizable across multiple processing units. It has achieved state-of-the-art performance on several tasks, and, even more importantly, was found to generalize very well to other NLP tasks, even with limited data.

In conclusion

The Transformer has taken the NLP community by storm, earning a place among the ranks of Word2Vec and LSTMs. Today, some of the state-of-the-art language models are based on the Transformer architecture, such as BERTand GPT-3. In this blog post, we discussed the evolution of sequence-to-sequence modelling, from RNNs, to RNNs with Attention, to solely relying on attention to model input to output sequences with the Transformer.